Chat with Local Free LLMs in Zotero

Introduction

Open source large language models (LLMs) are developing rapidly. Although they are not yet as capable as paid commercial LLMs, some of them are good enough for auxiliary paper-reading tasks such as automatic summarization and drafting article reviews. They are also free to use: all you need is your personal computer and a sufficient power supply. PapersGPT now supports running local LLMs seamlessly in Zotero, on both Windows and Mac.

Run free LLMs in Zotero with one click

Initialize the environment

When you install and start PapersGPT (v0.2.0 or later) for the first time, it takes some time to automatically initialize the libraries and packages required for running local LLMs. Please make sure your network connection is stable and can reach GitHub and Huggingface. This process runs in the background, so no manual environment configuration or installation is needed. On some Windows systems, the firewall may warn about a risk. Please grant the requested permissions so that the installation can complete.
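If initialization seems stuck, a quick reachability check can rule out network problems. The following is a minimal sketch, not part of PapersGPT: the host names `github.com` and `huggingface.co` and port 443 are assumptions about where the downloads come from, and `can_reach` and `check_hosts` are hypothetical helper names.

```python
import socket

# Assumed hosts; the exact download endpoints used by PapersGPT are not documented here.
HOSTS = ("github.com", "huggingface.co")


def can_reach(host, port=443, timeout=5.0, connect=socket.create_connection):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # The connection is closed as soon as the with-block exits.
        with connect((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections and timeouts.
        return False


def check_hosts(hosts=HOSTS, **kwargs):
    """Map each host name to whether it is reachable."""
    return {host: can_reach(host, **kwargs) for host in hosts}
```

Calling `check_hosts()` with no arguments probes both hosts over the network. A `False` value for either host suggests a proxy, firewall or DNS issue that should be fixed before restarting PapersGPT.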

Choose a model

Once the environment for running LLMs is initialized, the Local LLM option appears in PapersGPT. It is configured for your machine and offers built-in open source LLMs whose sizes match your hardware. The supported local free models are listed in the following table.

| Provider | Supported Models |
|---|---|
| OpenAI | gpt-oss-20b |
| Google | gemma-3-12b, gemma-3-4b, gemma-3-1b, gemma-3n-e4b |
| Qwen | qwen-3-8b, qwen-3-4b, qwen-3-1.7b |
| DeepSeek | deepseek-distill-llama, deepseek-distill-llama-small, deepseek-0528-distill-qwen3, deepseek-0528-distill-qwen3-small, deepseek-distll-qwen-1.5b |
| Microsoft | phi-4, phi-4-mini-reasoning |
| Mistral | mistral-7b, mistral-7b-small |
| Llama | llama-3.1-8b, llama-3.1-8b-small |

Please note that not all models will be displayed, because the GPU memory of your PC or laptop is limited. Only models whose size is smaller than your GPU memory are displayed. Any model that is offered for selection can be run safely on your local GPU. If your machine has more than one GPU, the largest one is used first.
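The selection rule described above can be sketched as follows. This is an illustration only, not PapersGPT's actual code: the `Gpu` and `Model` types and both function names are hypothetical, the model sizes are made-up placeholders rather than real download sizes, and "largest GPU" is interpreted here as the GPU with the most memory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    memory_gb: float


@dataclass(frozen=True)
class Model:
    name: str
    size_gb: float  # placeholder value for illustration, not a real size


def pick_gpu(gpus):
    """Return the GPU with the most memory, or None if there is no GPU."""
    return max(gpus, key=lambda gpu: gpu.memory_gb, default=None)


def displayable_models(models, gpus):
    """Keep only the models that are strictly smaller than the chosen GPU's memory."""
    gpu = pick_gpu(gpus)
    if gpu is None:
        return []
    return [model for model in models if model.size_gb < gpu.memory_gb]


# Example with invented numbers: an 8 GB card is preferred over a 2 GB one.
example_gpus = [Gpu("integrated", 2.0), Gpu("discrete", 8.0)]
example_models = [
    Model("gemma-3-1b", 1.0),
    Model("gemma-3-4b", 3.0),
    Model("gpt-oss-20b", 13.0),
]
visible = [m.name for m in displayable_models(example_models, example_gpus)]
```

With these invented numbers, `visible` contains the two smaller models, and the 13 GB placeholder entry is hidden because it does not fit into 8 GB.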

Download the model

After you select a model, such as gemma-3-4b, it is automatically downloaded from Huggingface to your computer. LLMs are generally large, so the download usually takes some time. The download progress is shown in PapersGPT. Once the download finishes, PapersGPT automatically loads the model in the background and starts the local LLM inference service.

Chat with local LLMs

You can chat with local LLMs while reading papers, either about a single paper or about several papers together. For example, you can generate a literature review from multiple related papers in Zotero.

Important Notes

The PapersGPT agent may be mistakenly flagged as a Trojan or virus on Windows

Microsoft Defender or other anti-virus software may mistakenly flag the PapersGPT agents as a Trojan or virus. In this case, you may see the following symptoms:

- The "Local LLM" item on the left side of PapersGPT does not appear.
- Local models cannot be downloaded to your computer.
- Local models cannot provide the chat service.

If you want to use local LLMs on Windows, please allow the PapersGPT agents to run on your device in Microsoft Defender or your anti-virus software.

Don't chat with local LLMs in power save mode

When chatting with PDFs using local LLMs, make sure your computer is not in low power mode, power save mode or a similar mode. Chatting with PDFs requires a lot of GPU computation, and these modes reduce GPU performance, which makes PapersGPT reply slowly.

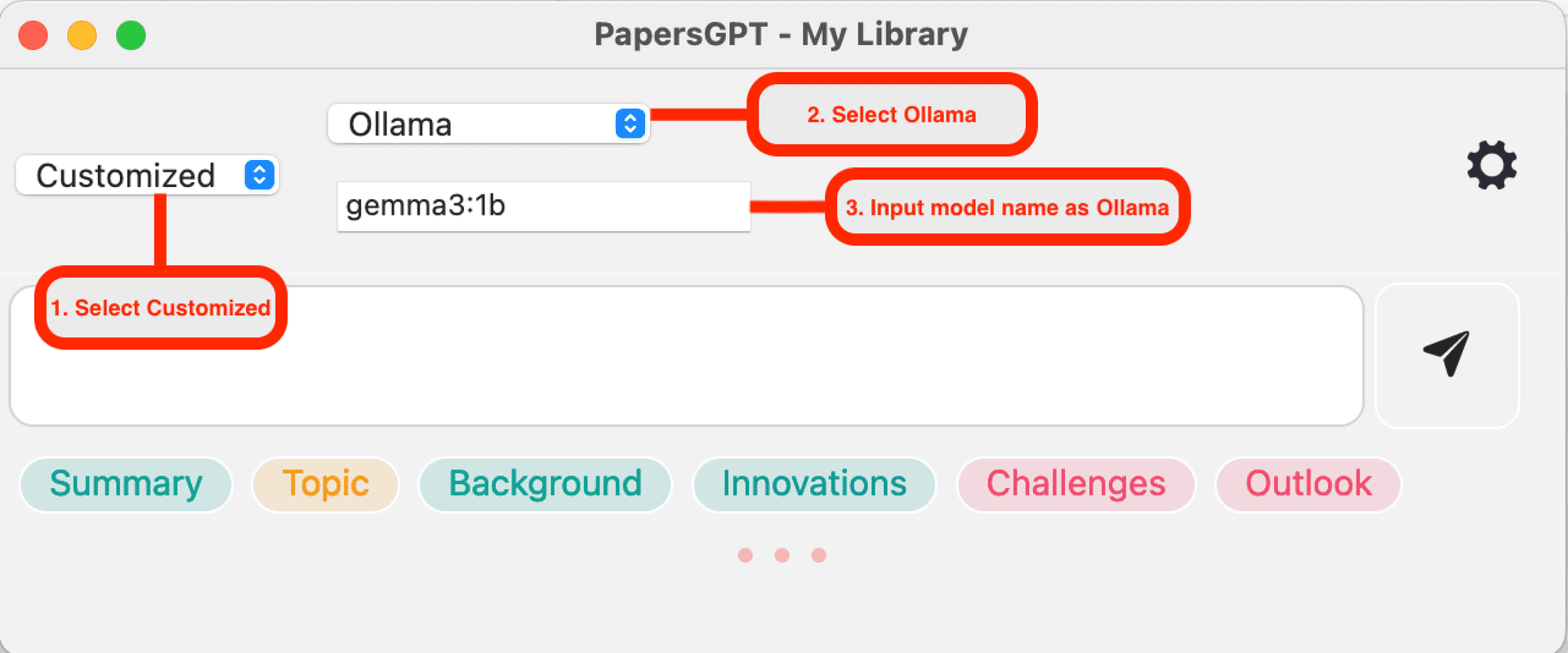

Compatible with Ollama

If you already use the Ollama app to run your local LLM service, just enter the model name in PapersGPT exactly as it appears in Ollama, for example 'gemma3:1b'.
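To find the exact model names to enter, you can ask the local Ollama service which models it has installed. The sketch below assumes Ollama is running at its default address `http://localhost:11434` and uses its `/api/tags` endpoint. The helper names `model_names` and `fetch_model_names` are hypothetical and not part of PapersGPT or Ollama.

```python
import json
import urllib.request

# Ollama's default local address; change it if your setup differs.
OLLAMA_URL = "http://localhost:11434"


def model_names(tags_payload):
    """Extract model names from the JSON returned by Ollama's /api/tags."""
    return [entry["name"] for entry in tags_payload.get("models", [])]


def fetch_model_names(base_url=OLLAMA_URL, timeout=5.0):
    """Query the running Ollama service for the names of installed models."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return model_names(json.load(resp))
```

Calling `fetch_model_names()` while Ollama is running returns a list of names such as 'gemma3:1b'. Any of them can be entered in PapersGPT as written.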